Remote Access

Remote Machines

From your desktop machine, you will often want to access other machines, such as Warwick's godzilla or the SCRTP cluster facilities.

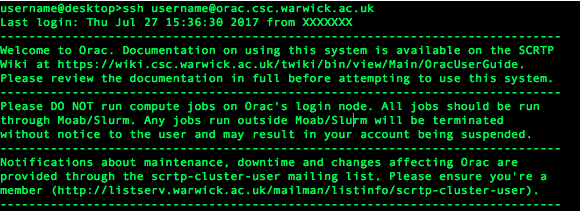

The message above distinguishes login nodes from compute nodes. On most cluster systems, you connect first to an externally accessible login node. There you have access to all your files, but you should not run compute jobs on it. Instead, you prepare a submission script and submit it to a queue, which runs it on the compute nodes. Smaller systems may not make this distinction. Most systems allow compiling code, and compressing and copying files, on the login nodes, and some also allow basic data visualisation. Check the welcome messages and documentation to be sure.

Using Machines

The SCRTP provides high performance facilities and a Cluster of Workstations (COW). The former provides about 2400 processors, available to groups and researchers by arrangement; ask your supervisor about access if required. The COW allows small jobs to run in the background across all SCRTP workstations and some dedicated machines. Both are controlled by a queueing system. More information is available on the wiki (login required).

In general, you may do the tasks needed to write and compile your code directly on the login nodes, as well as tasks associated with moving files, such as compressing them. All other jobs must be submitted to the queue, including most visualisation and analysis tasks. For these, an interactive queue is available, which gives you a shell to work in just as you would at your desktop. Small and/or simple data plotting tasks are allowed on login nodes, but please be courteous to other users.

If you need to run very large jobs (generally about 512 processors or more) you will want to apply for time on a large facility. For example, Warwick is part of HPC Midlands Plus. Read a summary of HPC facilities.

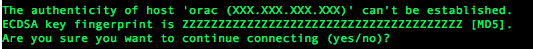

SSH

The basic command to log on to a remote machine is

ssh [username]@[machinename]

The [username] can be left out if it is the same as on your local (current) machine.
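One way to see this rule in action without logging in anywhere is OpenSSH's ssh -G option, which prints the settings ssh would use for a connection and then exits without connecting. A minimal sketch, assuming an OpenSSH client is installed; "someone" is a hypothetical username used only for illustration:

```shell
#!/bin/sh
# ssh -G prints the configuration ssh would use and exits without connecting.
# -F /dev/null ignores any personal or system-wide config files.
host=godzilla.csc.warwick.ac.uk

# Pick out the "user" line from ssh's resolved settings.
resolved_user() {
    ssh -G -F /dev/null "$1" | awk '$1 == "user" { print $2 }'
}

# No username given: ssh falls back to your local username.
default_user=$(resolved_user "$host")
# Username given explicitly ("someone" is a hypothetical example).
explicit_user=$(resolved_user "someone@$host")

echo "local username: $(id -un)"
echo "ssh $host -> user $default_user"
echo "ssh someone@$host -> user $explicit_user"
```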

Often you want to open windows on the remote computer and see them locally. For this, use the -X flag, as in ssh -X [username]@[machinename], which enables X11 forwarding: graphical programs run on the remote machine display their windows on your local screen.

In some cases, particularly when connecting from a Mac, -Y may be required instead. This enables "trusted" X11 forwarding, which skips some of the security checks that -X applies to the forwarded windows.
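The -X behaviour can also be made permanent in an OpenSSH config file (normally ~/.ssh/config), together with a short alias and your username. A sketch, assuming OpenSSH; the alias godzilla and the username yourname are hypothetical. It writes the config to a scratch file rather than your real one, and checks the result with ssh -G, which prints the resolved settings without connecting:

```shell
#!/bin/sh
# Scratch config file; in real use these lines would go in ~/.ssh/config.
cfg=$(mktemp)
cat > "$cfg" <<'EOF'
# godzilla is a hypothetical short alias chosen for this example.
Host godzilla
    HostName godzilla.csc.warwick.ac.uk
    # Hypothetical username; replace with your own.
    User yourname
    # Same effect as passing -X on every connection.
    ForwardX11 yes
    # Uncomment for the effect of -Y (trusted forwarding), e.g. from a Mac.
    # ForwardX11Trusted yes
EOF

# Show what "ssh godzilla" would now resolve to, without connecting.
ssh -G -F "$cfg" godzilla | grep -E '^(hostname|user|forwardx11) '
```

With those lines in ~/.ssh/config, ssh godzilla behaves like ssh -X yourname@godzilla.csc.warwick.ac.uk.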

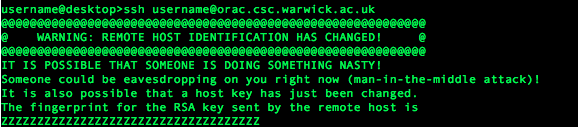

RSA Keys

Some SCRTP machines, such as Minerva or Orac, do not allow you to log on using your password, for security reasons. Instead, they use RSA keys. In short, this means a pair of files such that something encrypted using the first file can only be decrypted using the second. The first is called the private key, and should be kept safe; the second is the public key, which can be shared. Anyone with the public key can read such a message, but only someone holding the private key could have produced it, so a message that decrypts correctly must have come from you.

Full details of how to generate keys and get SCRTP access are here. Once your keys are set up, they will be used where possible to log on to all SCRTP machines. Logging on using keys is very similar to using a password, but you will now be asked for the passphrase that you used to set up the key rather than your password.
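SCRTP's own instructions take precedence, but as a generic sketch of what key generation involves, the OpenSSH ssh-keygen tool creates the pair of files described above. This example works in a scratch directory and passes a hypothetical passphrase with -N so that it runs unattended; normally you would run ssh-keygen interactively and type your passphrase when prompted:

```shell
#!/bin/sh
# Scratch directory, so no real keys under ~/.ssh are touched.
dir=$(mktemp -d)

# Hypothetical passphrase for this demo only; never store a real one in a script.
pass='correct horse battery staple'

# Generate a 4096-bit RSA key pair: private key in $dir/id_rsa,
# public key in $dir/id_rsa.pub.
ssh-keygen -q -t rsa -b 4096 -N "$pass" -C "demo key" -f "$dir/id_rsa"

# The public key is a single line of text that is safe to share.
cat "$dir/id_rsa.pub"

# The private key is stored encrypted, so reading it back needs the
# passphrase; here it is used to re-derive the public key.
derived=$(ssh-keygen -y -P "$pass" -f "$dir/id_rsa")
echo "$derived"
```

Without -f, ssh-keygen writes ~/.ssh/id_rsa and ~/.ssh/id_rsa.pub; only the .pub file is ever given to anyone else.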

SCP

The name 'ssh' stands for secure shell: like the sh command, it opens a (new) command line process, but on a remote machine and over an encrypted connection. To copy files to and from remote machines, there is a secure analogue of the copy command 'cp', called scp. Just like cp, it takes two file or directory names, the source and the destination.

Either or both of these can be prefixed with the remote location, written as username@machinename (or an IP address in place of the machine name) followed by a colon ':', like this:

scp tmp.text user@godzilla.csc.warwick.ac.uk:~/Examples/

Here '~' stands for the user's home directory on the remote machine, godzilla, so the file is copied into the Examples subdirectory there.

The * and ? wildcards can, of course, be used in both local and remote filenames, but note that tab-completion will usually only find filenames on the local machine.
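Remote wildcards are usually quoted because your local shell expands an unquoted * or ? against local files before scp even runs. A small sketch of the difference, using set -- to count the arguments a command would actually receive; the remote path is the one from the example above, and a.txt and b.txt are scratch files:

```shell
#!/bin/sh
# Scratch directory containing two local files that match *.txt.
dir=$(mktemp -d)
cd "$dir" || exit 1
touch a.txt b.txt

# Unquoted: the local shell expands the pattern against local files,
# so a command here would receive the local names, not the pattern.
set -- *.txt
unquoted_count=$#
unquoted_args=$*

# Quoted: the pattern reaches the command untouched, so scp can have it
# expanded against the remote machine's files, as in
#   scp 'user@godzilla.csc.warwick.ac.uk:~/Examples/*.txt' .
set -- 'user@godzilla.csc.warwick.ac.uk:~/Examples/*.txt'
quoted_count=$#
quoted_args=$*

echo "unquoted: $unquoted_count argument(s): $unquoted_args"
echo "quoted:   $quoted_count argument(s): $quoted_args"
```

In bash, an unquoted remote pattern that matches no local file is normally passed through unchanged, but zsh stops with "no matches found", so quoting is the safer habit.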

scp uses ssh behind the scenes, so if you're using multiple RSA keys or similar, you can supply the name of the identity file to use with the -i option.
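Rather than typing -i every time, OpenSSH lets you name the key for a host in its config file (normally ~/.ssh/config), and because scp runs ssh, it picks the setting up too. A sketch, assuming OpenSSH; the key name id_scrtp is hypothetical, and the config is written to a scratch file and checked with ssh -G, which prints resolved settings without connecting:

```shell
#!/bin/sh
dir=$(mktemp -d)
# Hypothetical key path; ssh -G only records it and never reads the key.
key="$dir/id_scrtp"

# Scratch config; in real use this block would go in ~/.ssh/config.
cfg="$dir/config"
cat > "$cfg" <<EOF
Host godzilla.csc.warwick.ac.uk
    # Use this key for the host, as if -i $key were given each time.
    IdentityFile $key
    # Offer only the listed key(s), not every key ssh can find.
    IdentitiesOnly yes
EOF

ssh -G -F "$cfg" godzilla.csc.warwick.ac.uk | grep -E '^(identityfile|identitiesonly) '
```

With such a Host block in ~/.ssh/config, a plain scp tmp.text user@godzilla.csc.warwick.ac.uk:~/Examples/ uses the named key exactly as if -i had been given.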

FTP and File Fetching

You are probably familiar with HTTP from webpages, where it stands for Hyper-Text (the content of webpages) Transfer Protocol. FTP similarly stands for File Transfer Protocol and is a system for transferring files between computers. Different systems may store files slightly differently: for example, Windows and Linux machines differ in how they signal an end-of-line in text files. In its text (ASCII) transfer mode, FTP converts line endings so that text files arrive in the form the receiving system expects.
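To make the end-of-line difference concrete: Windows ends each line of a text file with a carriage return and a line feed (\r\n), while Linux uses just a line feed (\n). A small sketch that builds both versions of the same two-line file and converts one into the other with tr, roughly the conversion an FTP text-mode transfer does for you:

```shell
#!/bin/sh
dir=$(mktemp -d)

# Same two lines, with Windows (\r\n) and Linux (\n) line endings.
printf 'first line\r\nsecond line\r\n' > "$dir/windows.txt"
printf 'first line\nsecond line\n' > "$dir/linux.txt"

# The Windows version is one byte longer per line: the extra \r.
windows_bytes=$(wc -c < "$dir/windows.txt" | tr -d ' ')
linux_bytes=$(wc -c < "$dir/linux.txt" | tr -d ' ')
echo "windows.txt: $windows_bytes bytes, linux.txt: $linux_bytes bytes"

# Deleting every carriage return converts Windows line endings to Linux ones.
tr -d '\r' < "$dir/windows.txt" > "$dir/converted.txt"
if cmp -s "$dir/converted.txt" "$dir/linux.txt"; then
    echo "converted file matches the Linux version"
fi
```

Deleting every \r is only safe for text files; a binary file treated this way would be corrupted, which is why FTP distinguishes text and binary transfers.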

There are a variety of programs, both command line and graphical, for fetching files using FTP, HTTP and other protocols. The former include ftp, wget and curl. The latter two can also fetch HTTPS webpages.

Note: scraping or crawling web pages using scripts is subject to some restrictions and on some websites fetching content too often can lead to you being banned. Check for any terms-of-service if you're accessing more content than you reasonably could from your browser, and obey any rate limits or other restrictions.

The basic use of wget is just wget [url], or wget -r [root_url], which recursively follows the links from that page (by default up to 5 levels deep) and downloads the pages and files they point to. You are most likely to use this command for fetching source code or data files, and instructions will probably be provided. Otherwise, more details are available here.
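curl can be tried out without a network connection, because it also understands file:// URLs. A minimal sketch, in which a local file stands in for something on a web or FTP server; for a real download, replace the file:// URL with an http:// or https:// one:

```shell
#!/bin/sh
dir=$(mktemp -d)
# Local file standing in for a remote one, so this runs offline.
printf 'example data\n' > "$dir/source.txt"

# -f: fail (non-zero exit) on server errors instead of saving an error page
# -sS: hide the progress bar but still report errors
# -o: name of the local file to write
curl -fsS -o "$dir/fetched.txt" "file://$dir/source.txt"

if cmp -s "$dir/source.txt" "$dir/fetched.txt"; then
    echo "fetched copy matches the source"
fi
```

For recursive downloads with wget, --no-parent stops it climbing above the starting directory, --level changes the default depth of 5, and --wait=SECONDS pauses between requests, which helps you stay within the rate limits mentioned above.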