Gihan Mallikarachchige's project on diversity in mathematics

Introduction

As the 21st century rolls on, technology is embedded in everything, including matters of life and death. Advances in medical technology, for example in cancer imaging, promise to identify diseases faster and ensure that healthcare systems can respond rapidly to crises.

As the 2019 Coronavirus pandemic showed, this is needed more than ever as the world's population is projected to grow older and the climate hotter. However, do these technologies work equally well for everyone? Do they give equal outcomes to all patients? The medical statistics used to create and test these technologies reveal that this is not the case, and that we should be more careful in integrating technology into matters of life and death.

Is existing medicine fair to all?

Pulse oximeters are devices clipped to the end of a finger that use the absorption of light to measure blood oxygen levels. Since their invention in the 1970s, they have been widely used across medicine because they are easy to use and non-invasive: unlike a blood test, they require no needles. Their importance was highlighted during the 2019 Coronavirus pandemic, when they were vital in checking whether patients were receiving enough oxygen. However, the accuracy of pulse oximeters, particularly among ethnic minorities, was rarely brought into question in their nearly 50 years of use. At the height of the pandemic, a group of researchers at the University of Michigan set out to answer this question.

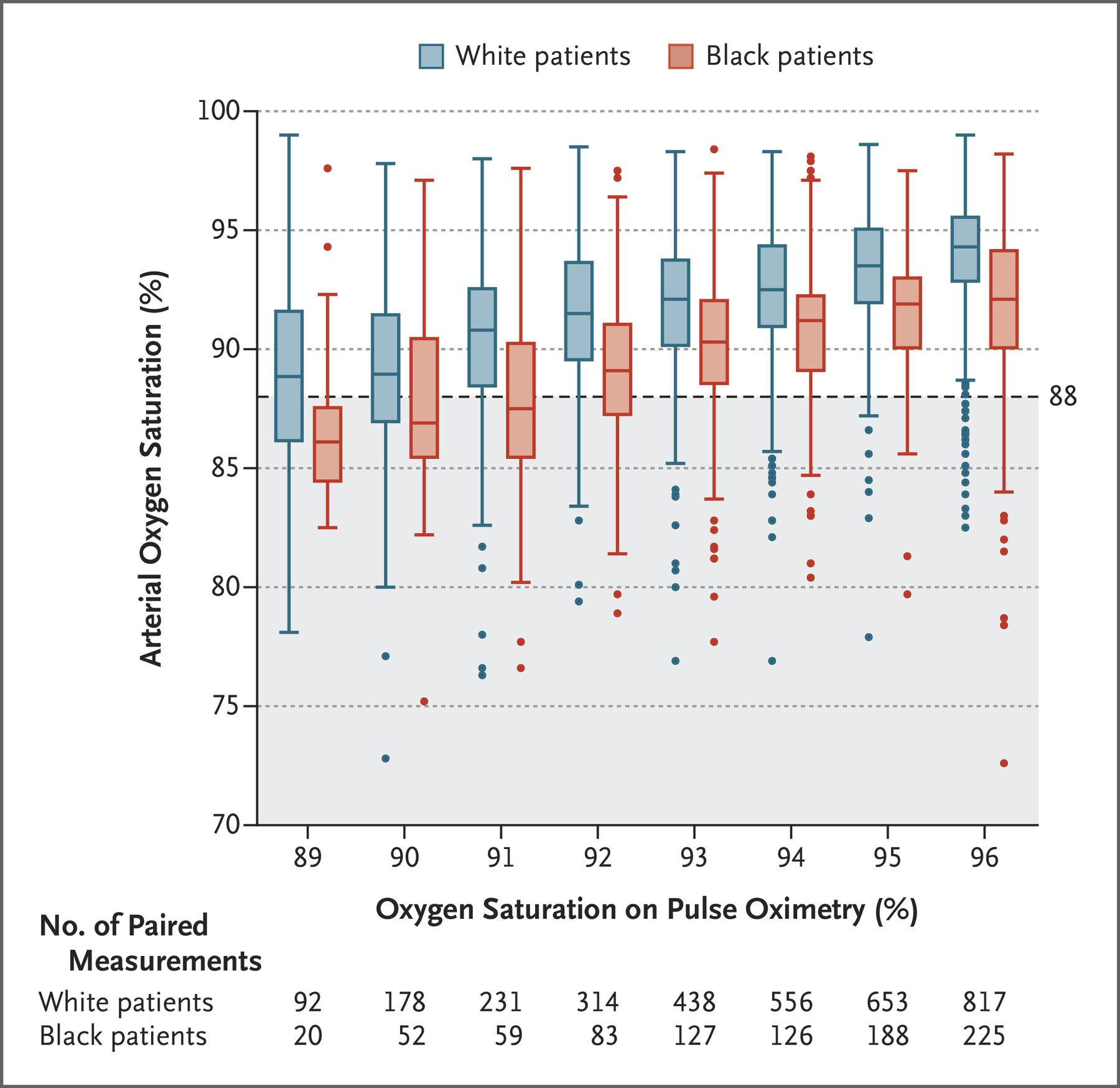

The methodology of the research was simple. The researchers compared the blood oxygen levels given by a pulse oximeter with those measured from an arterial blood sample (oxygen levels measured directly in blood drawn from an artery). They evaluated these readings in a dataset of 8675 white patients and 7618 black patients gathered from the University of Michigan Hospital and 178 other hospitals. The researchers then grouped patients who had the same pulse oximetry reading and compared their arterial oxygen levels. This analysis is summarized by the box plot in Fig 1.).

The table at the bottom of Fig 1.) groups patients by the blood oxygen level measured by the pulse oximeter. The dashed line marks an arterial blood oxygen level of 88%. A patient whose arterial level falls below this line while the pulse oximeter shows a reassuring reading has occult hypoxemia: a dangerous lack of blood oxygen that the device fails to detect. As Fig 1.) illustrates, black patients on average had a lower arterial blood oxygen level than white patients with the same pulse oximeter reading. Controlling for factors such as diabetes or lung disease, this means that black patients had three times the chance of having occult hypoxemia missed by pulse oximeters compared to their white counterparts.
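
To make the comparison concrete, here is a minimal Python sketch of how an occult hypoxemia rate could be computed for each group from paired readings. The records are invented toy values, not the study's data. The "normal-looking" pulse oximeter band of 92 to 96% and the function name `occult_hypoxemia_rates` are assumptions for illustration; only the 88% arterial cutoff comes from the text above.

```python
from collections import defaultdict

# Hypothetical toy records: (race, pulse oximeter reading %, arterial blood reading %).
# These values are invented for illustration and are NOT data from the study.
RECORDS = [
    ("white", 94, 93), ("white", 95, 91), ("white", 93, 86), ("white", 96, 94),
    ("black", 94, 87), ("black", 95, 90), ("black", 93, 85), ("black", 96, 92),
]


def occult_hypoxemia_rates(records, spo2_low=92, spo2_high=96, sao2_cutoff=88):
    """Per group, the fraction of patients whose arterial oxygen is below the cutoff
    even though their pulse oximeter reading falls in the assumed normal-looking band."""
    in_band = defaultdict(int)  # patients whose oximeter reading is in the band
    missed = defaultdict(int)   # of those, patients who were actually hypoxemic
    for race, spo2, sao2 in records:
        if spo2_low <= spo2 <= spo2_high:
            in_band[race] += 1
            if sao2 < sao2_cutoff:
                missed[race] += 1
    return {race: missed[race] / in_band[race] for race in in_band}


rates = occult_hypoxemia_rates(RECORDS)
print(rates)                            # {'white': 0.25, 'black': 0.5}
print(rates["black"] / rates["white"])  # 2.0
```

In this toy example the ratio is 2.0; the study's real data gave roughly three times the rate for black patients.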

The likely cause of this racial bias is straightforward. Pulse oximeters measure blood oxygen levels from the absorption and reflection of light, and skin pigmentation also absorbs light, so readings can be skewed on darker skin. This error can be mitigated by calibrating the devices on a racially diverse sample group. This research indicates that the pulse oximeters used throughout medicine have not been adequately calibrated for darker skin tones and, as a result, are less accurate for patients with darker skin.

This raises the distressing question of how many excess deaths among patients of colour were caused by inaccurate pulse oximetry readings, especially during the 2019 Coronavirus pandemic. The exact answer may never be known, yet this case demonstrates that we should be more skeptical not only about future technologies but also about the present technologies we use to save lives day-to-day.[3]

Can algorithms allocate fair care for all?

Though not prevalent in the UK, medical algorithms are used extensively in the US to allocate healthcare to patients. Nearly 200 million patients in America, around two-thirds of the population, rely on algorithms to decide the care they receive. These algorithms process a patient's health data to give them a risk score between 1 and 100. The higher the risk score, the more care, such as routine checkups, is given to the patient. Algorithms such as these are used extensively in the US healthcare system because they are believed to improve outcomes for patients while minimizing costs. Because of this apparent efficiency, governments around the world are considering, or have already introduced, similar systems in their own healthcare systems as healthcare costs become a growing burden in the developed world.

However, are these algorithms fair? Do they actually allocate care to those who need it most? As in the previous case, it was only in 2019 that researchers investigated whether these algorithms are fair, especially towards people of colour. They tested one of these commercial health algorithms against a dataset of 6,079 patients who identified as black and 43,539 patients who identified as white to look for racial bias.

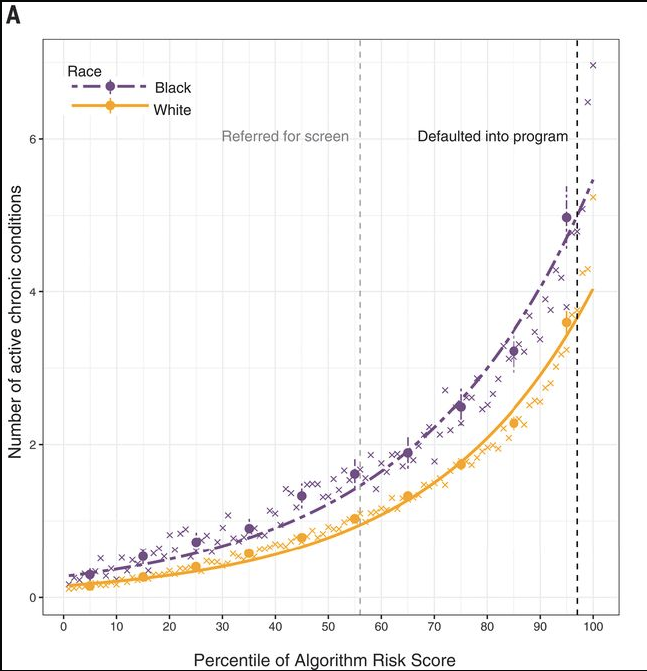

As illustrated in Fig 2.), they discovered that the algorithm gave black patients a lower risk score than white patients with the same number of active chronic conditions. The same bias appeared when the researchers tested the algorithm's risk score against indicators for specific conditions such as diabetes, heart disease or kidney failure. In all cases, black patients were given a lower risk score than white patients in equivalent health. As highlighted in the paper, this bias would lead to black patients receiving less care than their white peers, which could in turn cause excess deaths among black patients.

Through careful analysis, the researchers found that this algorithmic bias is caused by the algorithm's use of healthcare cost: it treats the amount spent on a patient's healthcare as a measure of how much care they need, so higher costs lead to a higher risk score. Due to systemic issues such as distrust of the medical establishment, black patients generate $1801 less in healthcare costs per year than white patients with the same level of health. Consequently, the algorithm infers that because black patients spend less on healthcare, they must have a lower risk of needing care.
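
The effect of using cost as a stand-in for need can be sketched with a toy model. In this hypothetical Python example, patients with the same number of chronic conditions differ only in how much healthcare cost they generate. The $1,000-per-condition cost model, the percentile-based scoring function and all names are assumptions made for illustration; only the $1801 gap comes from the study.

```python
COST_PER_CONDITION = 1000  # assumed yearly cost per chronic condition (invented)
COST_GAP = 1801            # yearly cost gap at equal health reported in the study


def annual_cost(group, conditions):
    """Toy cost model: equally sick patients, but black patients generate less cost."""
    cost = COST_PER_CONDITION * conditions
    if group == "black":
        cost -= COST_GAP
    return max(cost, 0)


def percentile_scores(values):
    """Risk score from 1 to 100: the percentage of the cohort at or below each value."""
    n = len(values)
    return [max(1, round(100 * sum(v <= x for v in values) / n)) for x in values]


# Hypothetical cohort: one white and one black patient with 0 to 5 chronic conditions.
cohort = [(group, c) for group in ("white", "black") for c in range(6)]
cost_scores = percentile_scores([annual_cost(g, c) for g, c in cohort])
need_scores = percentile_scores([c for _, c in cohort])

for (group, c), by_cost, by_need in zip(cohort, cost_scores, need_scores):
    if c == 3:
        print(f"{group}, 3 conditions: cost-based score {by_cost}, need-based score {by_need}")
# white, 3 conditions: cost-based score 75, need-based score 67
# black, 3 conditions: cost-based score 50, need-based score 67
```

Scoring by cost pushes the black patient's score down, while scoring by the number of conditions gives both equally sick patients the same score.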

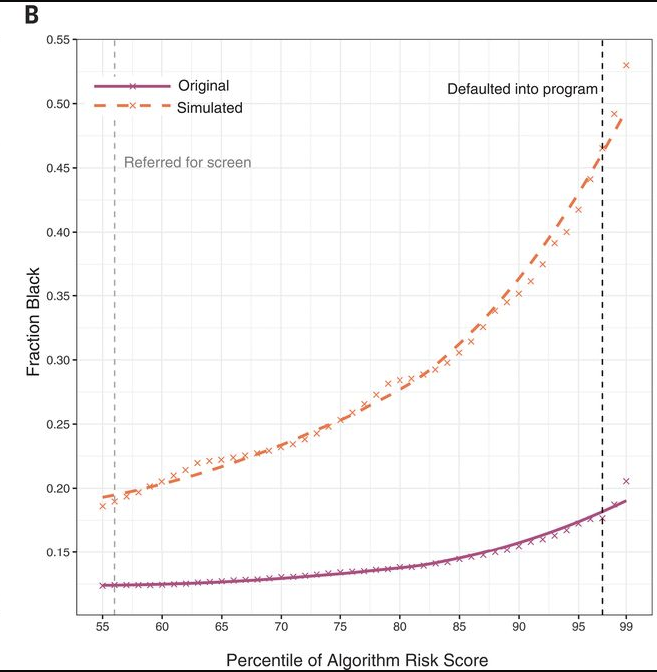

To test this, the researchers simulated a modified algorithm that no longer relies on medical cost as a measure of need. Fig 3.) compares the fraction of black patients at a given risk score under the original algorithm and under the simulated algorithm that removes the bias. The horizontal axis starts at 55 because patients with a risk score above 55 are referred for additional care. As the figure illustrates, a greater proportion of black patients receive a high risk score under the simulated algorithm than under the original, leading to a more equitable outcome for all.
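
The comparison in Fig 3.) comes down to asking what share of the patients above the referral threshold are black under each version of the algorithm. A minimal Python sketch, assuming invented scores for eight hypothetical patients (not the study's data) and a hypothetical helper `group_share_above`:

```python
def group_share_above(groups, scores, threshold=55, group="black"):
    """Share of patients scoring above the threshold who belong to `group`."""
    selected = [g for g, s in zip(groups, scores) if s > threshold]
    if not selected:
        return 0.0
    return selected.count(group) / len(selected)


# Hypothetical scores for the same eight patients under two versions of an algorithm.
groups = ["white"] * 4 + ["black"] * 4
original_scores = [90, 80, 70, 60, 58, 50, 40, 30]
cost_removed_scores = [85, 70, 60, 45, 88, 75, 57, 35]

print(group_share_above(groups, original_scores))      # 0.2
print(group_share_above(groups, cost_removed_scores))  # 0.5
```

Here the share of black patients above 55 rises from 0.2 to 0.5 once the cost-driven scores are replaced; the numbers only illustrate the calculation, not the size of the effect in the study.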

This case shows how carefully algorithms that make decisions about populations must be designed, in medicine and beyond. The designers of the original algorithm were more than likely unaware of the racial bias caused by using medical costs as an input. Biases like this can be deadly for groups already at the marginalized edges of society, so greater scrutiny is needed when testing these algorithms. As with pulse oximeters, part of the solution is to require the makers of these algorithms to test them on racially diverse samples before they are deployed on the general population. More subtly, the teams designing these algorithms should include people from these marginalized groups, who can raise questions of bias and push for thorough testing at the design table.[4]

Can technology tackle medical misinformation?

The 2019 Coronavirus pandemic highlighted how quickly and extensively medical information can spread in our interconnected world. As seen during the pandemic, medical misinformation is particularly dangerous because it can lead patients to avoid medical care such as vaccination, which puts other patients, such as the immunocompromised, at further risk. This misinformation spreads through social media networks, and it is up to social media companies to filter it out before it harms us. This raises the question of whether these digital filters are effective at their job and, more importantly, whether they work regardless of language, grammar or other quirks of human writing.

Most text-based filters on social media platforms rely on some machine learning model, such as a support vector machine. Like all statistical models, these models are first trained on datasets in which each item is labelled as either misinformation or true. They then use this training to predict whether new information is misinformation. A group of researchers decided to test different machine learning models on 20,000 tweets gathered during the 2009 Flu Pandemic and the subsequent conversations around the flu. Unlike other papers, the researchers split the dataset between Standard American English (SAE) and African American Vernacular English (AAVE), a dialect of English used predominantly by the African-American community. In particular, they tested whether different machine learning models would filter misinformation differently depending on the dialect used.

Some models were highly effective at filtering out misinformation in both dialects, with a difference of about 1% between their false negative rates (the share of misinformation that the filter fails to catch). Other models, however, consistently let through more misinformation written in AAVE than in SAE, with a difference of nearly 35% between the false negative rates of the two samples.
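
The false negative rate is the share of actual misinformation that a filter lets through. A minimal Python sketch of computing it separately for each dialect, assuming labels of 1 for misinformation and 0 otherwise, with toy labels and predictions invented for illustration (the function names are hypothetical and do not come from the study):

```python
def false_negative_rate(labels, predictions):
    """Share of actual misinformation (label 1) that the filter missed (prediction 0)."""
    preds_on_positives = [p for y, p in zip(labels, predictions) if y == 1]
    if not preds_on_positives:
        return 0.0
    return preds_on_positives.count(0) / len(preds_on_positives)


def fnr_by_group(groups, labels, predictions):
    """False negative rate computed separately for each group (e.g. each dialect)."""
    rates = {}
    for g in sorted(set(groups)):
        idx = [i for i, gi in enumerate(groups) if gi == g]
        rates[g] = false_negative_rate([labels[i] for i in idx],
                                       [predictions[i] for i in idx])
    return rates


# Toy data: 1 = misinformation, 0 = not misinformation (invented for illustration).
groups = ["SAE"] * 5 + ["AAVE"] * 5
labels = [1, 1, 1, 1, 0] + [1, 1, 1, 1, 0]
predictions = [1, 1, 1, 0, 0] + [1, 0, 0, 1, 1]

rates = fnr_by_group(groups, labels, predictions)
print(rates)                         # {'AAVE': 0.5, 'SAE': 0.25}
print(rates["AAVE"] - rates["SAE"])  # 0.25
```

A gap between the per-group rates, 0.25 in this toy example, is the kind of disparity the researchers measured; auditing a filter this way only requires knowing which group each test item belongs to.
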

Reinforced by similar studies showing how AI filters let through misinformation in other languages, this evidence highlights how biases in technology can inadvertently disadvantage ethnic minorities and other marginalized groups. With regard to medical misinformation, this is especially dangerous because it can lead minority groups to avoid medical care such as vaccinations or cancer treatment and, consequently, lead to excess deaths.[5]

How to make the future fair to all?

Throughout this piece, we have seen how both present and past medical technologies have been biased against marginalized people of colour. This bias has fatal consequences for those already at the edges of society, who do not get the medical care they need and are more exposed to medical misinformation. Across the studies discussed here, the common link is that the samples used to train and design these technologies were biased. They fail to take into account the diversity of populations and treat populations as one homogeneous group. As a result, they become biased against groups that differ from the majority. The most apparent conclusion is that medical technologies must be designed and tested thoroughly so that bias is eliminated before they are widely used. As every case made evident, this means using diverse datasets so that everyone in society is represented in both the design and the testing. Equally important, as the pulse oximetry case showed, existing practices and technologies should be tested for bias before we continue using them. More subtly, the statisticians, designers and medical practitioners who create and use these innovations should also come from varied and diverse backgrounds. Black scientists, along with other ethnic minorities, are underrepresented throughout academia, and consequently issues such as racial bias in medical statistics are not raised often enough. By ensuring that both designers and samples come from diverse backgrounds, we can help make the future of medicine equal for all.[1][2]

Sources

1.) Knight H., Deeny S., et al., 2021, Challenging racism in the use of health data, Volume 3 Issue 3, Lancet Digital Health.

2.) Noor P., 2020, Can we trust AI not to further embed racial bias and prejudice?, Racism in Medicine, BMJ.

3.) Sjoding M.W. et al., 2020, Racial Bias in Pulse Oximetry Measurement, Correspondence, The New England Journal of Medicine, 383:2477-2478.

4.) Obermeyer Z. et al., 2019, Dissecting racial bias in an algorithm used to manage the health of populations, Science, 366:447-453.

5.) Lwowski et al., 2021, The risk of racial bias while tracking influenza-related content on social media using machine learning, Journal of the American Medical Informatics Association, 28(4):839-849.