Shaping AI team publishes a report evaluating AI research controversies

Shaping AI UK team publishes report on their recent expert workshop evaluating AI controversies (2012-2022)

What features of AI have triggered controversy among experts during the last 10 years? Academics in the Centre for Interdisciplinary Methodologies (University of Warwick) have published a workshop report outlining provisional results of the ongoing ESRC-funded research project Shaping AI, which investigates recent debates around AI in four countries (the UK, Germany, France and Canada).

- As part of the ongoing Shaping AI project, academics at the University of Warwick conducted an expert consultation and hosted a workshop with UK-based AI experts to examine what they perceive to be the most important but possibly overlooked controversies in AI.

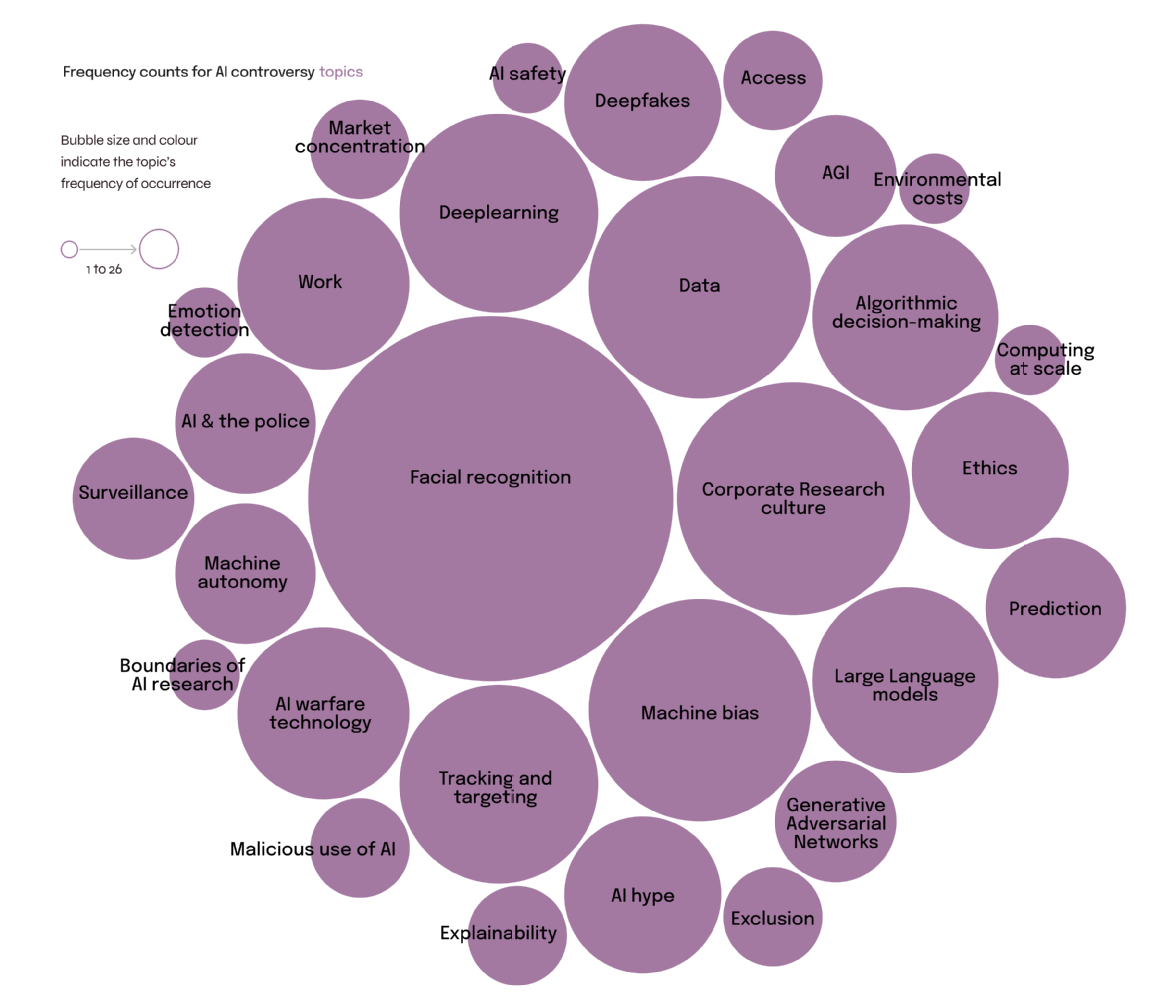

- One of the main findings of this ongoing research is that the most controversial developments identified by UK experts concern the technical architecture of general-purpose AI: lack of transparency, misinformation, machine bias, data appropriation without consent, worker exploitation and the high environmental costs associated with the large models that define AI today.

- The consulted academics say that frameworks for the regulation of AI should address the lack of oversight over the data and methods used to create AI, rather than focusing only on its application in specific areas (health, mobility, education and so on).

Shaping AI is an international social science research project that examines public debates about artificial intelligence in four countries across a ten-year period (2012-2022). Academics at the University of Warwick are examining research controversies in AI and analysing expert perceptions in the UK, in collaboration with partners undertaking similar research in North America and Europe.

The University of Warwick team has held a consultation with 70 UK experts in AI and in "AI and society" about what they perceive to be the most important and most overlooked controversies in AI. The consultation identified facial recognition technology and its application in society, for example its use in schools and by the police, as a major area of concern. However, the most controversial developments identified by UK experts concern the technical architecture of general-purpose AI.

During a recent workshop, the University of Warwick researchers presented the results of this consultation and an analysis of the main AI controversies identified, and worked with 40 experts to evaluate the findings and discuss what society should be most concerned about in the years to come.

During the workshop, participants said that people should be most concerned about two things: the lack of public knowledge and oversight around the origins of the data AI is trained on (for example, where the data comes from and whether consent has been obtained to use it), and the risks associated with the concentration of control over critical infrastructure in society in a small number of big corporations. They also highlighted the human and environmental costs of training and deploying large AI models like ChatGPT, which relies on large amounts of both freely available and copyrighted data as well as inexpensive human labour, and is highly energy-intensive.

Read the Shaping AI workshop report here.

Figure caption: Frequency counts for AI controversy topics identified in the UK expert consultation, Autumn 2021.

Shaping 21st Century AI: Controversy and Closure in Research, Policy and Media

AI has become the subject of heated debate across domains in recent years. The international research project Shaping AI analyses AI controversies in four countries with the critical objective of identifying the most important and possibly overlooked concerns, disputes and problematics that have arisen in relation to AI as a strategic area of research, public policy and societal change during the last ten years.

Read more about the University of Warwick's contribution to Shaping AI here.