Computer Science News

1st Place at Zero Cost NAS Competition

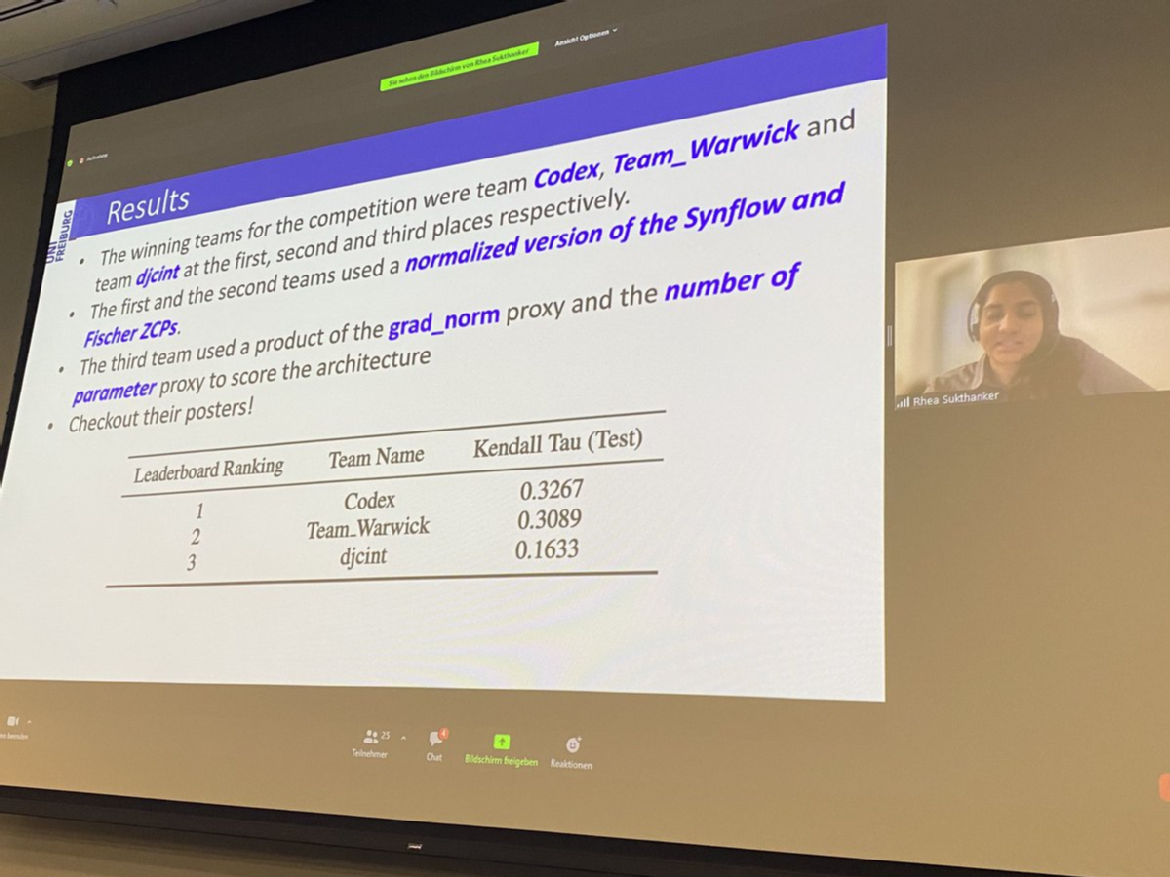

Our teams from Warwick DCS have won both 1st and 2nd place in the Zero Cost NAS Competition, held in conjunction with the AutoML'22 conference.

Over the past year, Neural Architecture Search (NAS) has attracted a lot of attention. Although it can automate the discovery of neural architectures that outperform hand-crafted ones, it comes at a great cost, requiring thousands of GPU hours to perform the search. The Zero Cost NAS Competition challenges participants to design efficient proxies for NAS that evaluate neural architectures using negligible computational resources.

In collaboration with the AutoCAML team at Samsung AI Cambridge (led by Dr. Hongkai Wen), our research students, Lichuan Xiang and Youyang Sha, proposed new zero-cost NAS metrics that exploit the compressibility of neural networks. Our metrics are extremely efficient to run (reducing search cost from weeks or days to minutes) and achieve impressive results across multiple search spaces and datasets. In the competition, our teams won both 1st and 2nd place (using different scoring functions), and the performance gap to the 3rd-place team is almost 2x. Check out our poster here.